Google's TurboQuant AI-compression algorithm can reduce LLM memory usage by 6x

TurboQuant makes AI models more efficient without reducing output quality, unlike other methods.


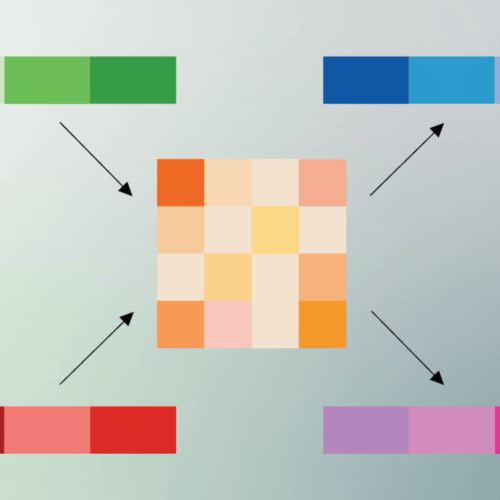

Even if you don't know much about the inner workings of generative AI models, you probably know they need a lot of memory. Hence, it is currently almost impossible to buy a measly stick of RAM without getting fleeced. Google Research recently revealed TurboQuant, a compression algorithm that reduces the memory footprint of large language models (LLMs) while also boosting speed and maintaining accuracy.

TurboQuant is aimed at reducing the size of the key-value cache, which Google likens to a "digital cheat sheet" that stores important information so it doesn't have to be recomputed. This cheat sheet is necessary because, as we say all the time, LLMs don't actually know anything; they can do a good impression of knowing things through the use of vectors, which map the semantic meaning of tokenized text. When two vectors are similar, that means they have conceptual similarity.

High-dimensional vectors, which can have hundreds or thousands of dimensions, may describe complex information like the pixels in an image or a large data set. They also occupy a lot of memory and inflate the size of the key-value cache, bottlenecking performance.

To make models smaller and more efficient, developers employ quantization techniques to run them at lower precision. The drawback is that the outputs get worse: the quality of token estimation goes down. With TurboQuant, Google's early results show an 8x performance increase and 6x reduction in memory usage in some tests without a loss of quality.
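To make the memory-versus-precision trade-off concrete, here is a minimal sketch of ordinary uniform quantization applied to a made-up block of cached key vectors. It is not TurboQuant, whose internals this article does not describe. The cache shape, the 4-bit width, and the helper names `quantize_rows` and `dequantize_rows` are all assumptions chosen for illustration.

```python
import numpy as np

def quantize_rows(x, bits=4):
    """Uniform per-row quantization: map each row's [min, max] onto 2**bits levels."""
    levels = 2 ** bits - 1
    lo = x.min(axis=1, keepdims=True)
    hi = x.max(axis=1, keepdims=True)
    scale = (hi - lo) / levels
    scale = np.where(scale == 0, 1.0, scale)  # guard against constant rows
    codes = np.round((x - lo) / scale).astype(np.uint8)
    return codes, scale, lo

def dequantize_rows(codes, scale, lo):
    """Approximate reconstruction of the original floats from integer codes."""
    return codes.astype(np.float32) * scale + lo

# Hypothetical cache slice: 1024 cached tokens, 128 dimensions each.
rng = np.random.default_rng(0)
keys = rng.standard_normal((1024, 128)).astype(np.float32)

codes, scale, lo = quantize_rows(keys, bits=4)
restored = dequantize_rows(codes, scale, lo)

# Storage estimate: 16-bit baseline versus 4-bit codes (assumed packed two per
# byte, although this sketch keeps them unpacked in uint8) plus a 16-bit scale
# and offset per row.
fp16_bytes = keys.size * 2
q_bytes = keys.size * 4 // 8 + scale.size * 2 + lo.size * 2

print(f"compression vs fp16: {fp16_bytes / q_bytes:.2f}x")
print(f"max absolute error:  {np.abs(restored - keys).max():.4f}")
```

The rounding error printed at the end is the kind of precision loss that degrades outputs with conventional quantization; the claim reported for TurboQuant is that it achieves its savings without a comparable loss of quality.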


Related articles

More stories that share tags, source, or category context.

GrapheneOS fixes Android VPN leak Google refused to patch

Comments

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.

The new Wild West of AI kids’ toys

These connected companions could disrupt everything from make-believe to bedtime stories. No wonder some lawmakers want them banned.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.

Сначала пугали Huawei. Теперь в прицеле Google и Microsoft. В Евросоюзе боятся превратиться в «технологическую колонию» США

ЕС встал перед выбором — цифровая колония или технологический суверенитет.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.

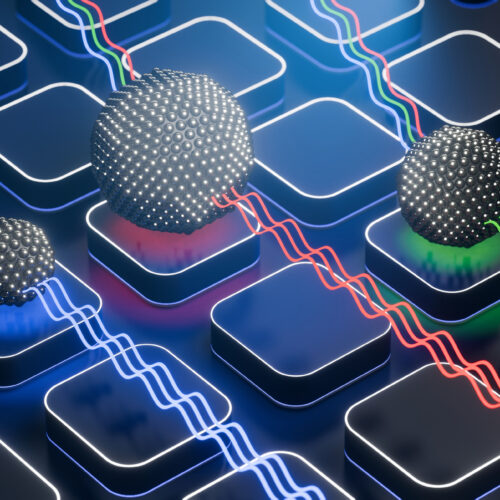

Manufacturing qubits that can move

It's hard to mix electronic manufacturing and flexible geometry.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.

More from Ars Technica

Fresh reporting and follow-up coverage from the same newsroom.

The new Wild West of AI kids’ toys

These connected companions could disrupt everything from make-believe to bedtime stories. No wonder some lawmakers want them banned.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.

Manufacturing qubits that can move

It's hard to mix electronic manufacturing and flexible geometry.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.

Trump reportedly plans to fire FDA Commissioner Marty Makary

The plan isn't final and could change, but his ouster would be no surprise.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.

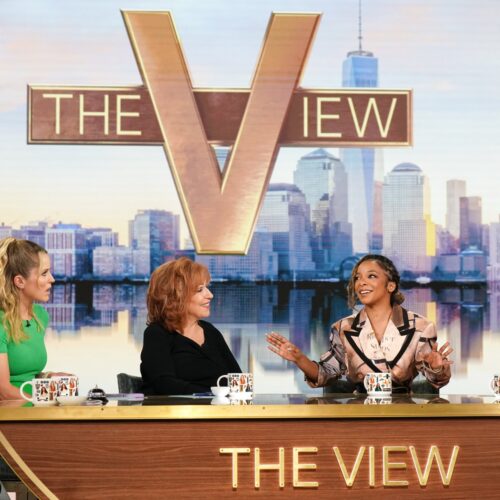

ABC refuses to capitulate to Trump admin, fights FCC probe into The View

FCC chair hasn't been able to bully ABC and owner Disney into submission.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.