Running local models on Macs gets faster with Ollama's MLX support

Apple Silicon Macs get a performance boost thanks to better unified memory usage.

Signal weather

Stable

The story has moved beyond the first headline and now acts as a reliable context anchor.

Ollama, a runtime system for operating large language models on a local computer, has introduced support for Apple's open source MLX framework for machine learning. Additionally, Ollama says it has improved caching performance and now supports Nvidia's NVFP4 format for model compression, making for much more efficient memory usage in certain models. Combined, these developments promise significantly improved performance on Macs with Apple Silicon chips (M1 or later)—and the timing couldn't be better, as local models are starting to gain steam in ways they haven't before outside researcher and hobbyist communities. The recent runaway success of OpenClaw—which raced its way to over 300,000 stars on GitHub, made headlines with experiments like Moltbook and became an obsession in China in particular—has many people experimenting with running models on their machines. Read full article Comments

Stay on the signal

Follow Running local models on Macs gets faster with Ollama's MLX support

Follow this story beyond a single article: new follow-ups, adjacent sources, and the evolving storyline.

Story map

Understand this topic fast

A quick entry into the story: why it matters now, who is involved, and where to go next for context.

Why it matters now

Topic constellation

Open the live map for this story

See which entities, story threads, sources, and follow-up articles shape this story right now.

Click nodes to continue

Entity pages

Story threads

Story timeline

Continue with this story

A short sequence of events and follow-up stories to understand the arc quickly.

How reliable this looks

Signal and trust for Ars Technica

This source works at a rapid pace: 100% of recent stories land in the hot window, and 0% carry visible search signal.

Reliability

92

Freshness

100

Sources in storyline

1

Related articles

More stories that share tags, source, or category context.

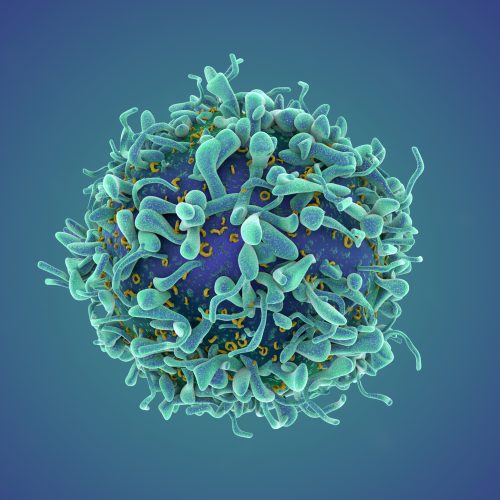

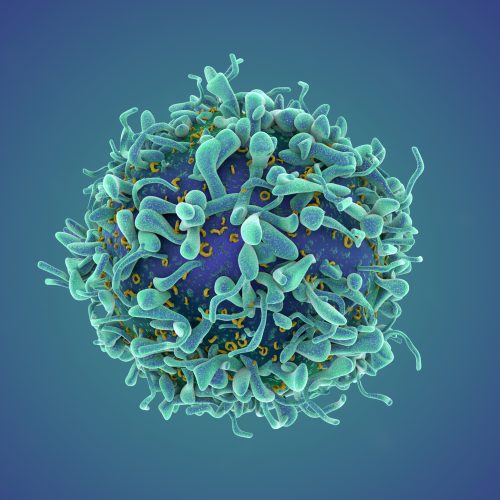

A revolutionary cancer treatment could transform autoimmune disease

Researchers are testing CAR T cell therapy as a way to reset the immune system.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.

The US is betting on AI to catch insider trading in prediction markets

The Commodity Futures Trading Commission wants us to know it's taking this very seriously.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.

Russia pressures university students to become wartime drone pilots

Universities promise no frontline duty and perks if students enlist in military.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.

Anthropic’s $1.5B copyright settlement is getting messy as judge delays approval

Lawyers accused of rushing historic settlement to seize $320 million in fees.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.

More from Ars Technica

Fresh reporting and follow-up coverage from the same newsroom.

A revolutionary cancer treatment could transform autoimmune disease

Researchers are testing CAR T cell therapy as a way to reset the immune system.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.

The US is betting on AI to catch insider trading in prediction markets

The Commodity Futures Trading Commission wants us to know it's taking this very seriously.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.

Russia pressures university students to become wartime drone pilots

Universities promise no frontline duty and perks if students enlist in military.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.

Anthropic’s $1.5B copyright settlement is getting messy as judge delays approval

Lawyers accused of rushing historic settlement to seize $320 million in fees.

Signal weather

Momentum is building quickly, so this card is a good early entry point into the topic.

Why now

Fresh coverage with immediate momentum.